Don’t Measure Skills at the End. Design for Measurement at the Start.

Most skill measurement conversations happen too late.

After the training launches.

After the rollout deck.

After the participation numbers come in.

Then someone asks:

“Can you provide an update on the program?”

Which usually translates to:

How many people attended?

How many completed it?

What were the satisfaction scores?

Not because that’s what matters most.

But because that’s what we’ve trained leaders to look for.

And at that point, activity is all you can report.

Attendance.

Completion rates.

Survey sentiment.

Activity is not capability.

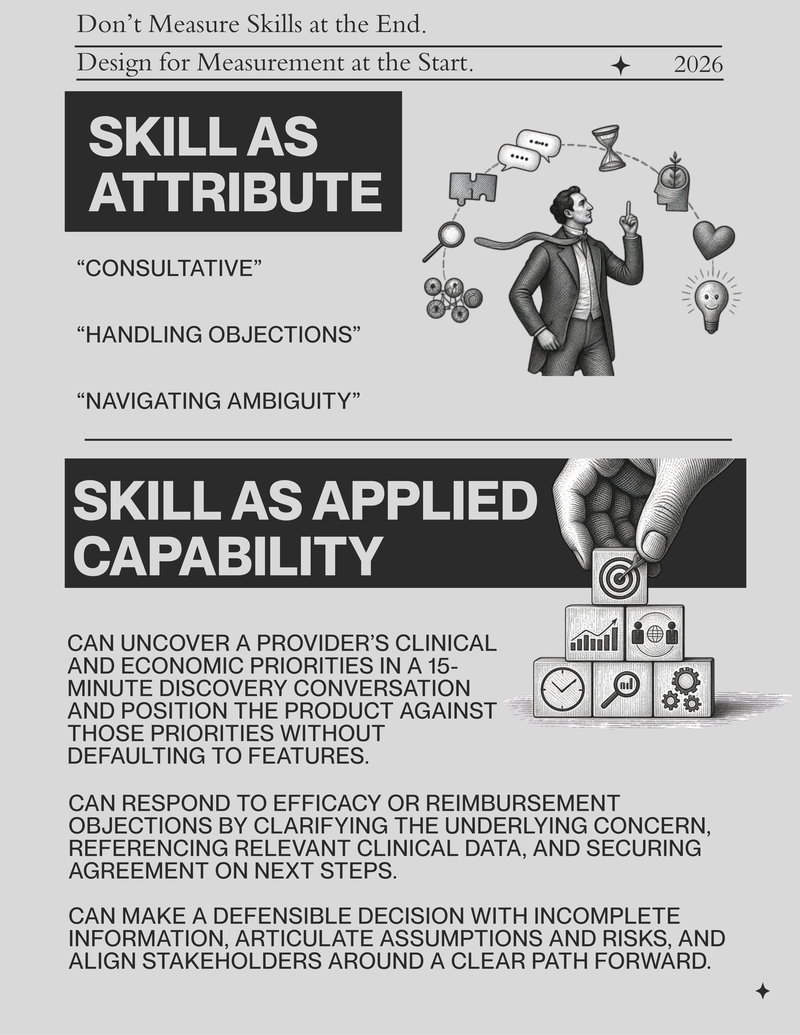

If a skill is defined as applied capability in real work, measurement cannot be an afterthought.

It must be part of the definition.

This is where the shift becomes structural.

If collaboration is required to build skills, it must be required in the design of skill development.

And if performance impact is required in the outcome, it must be required in the definition.

Measurement is not something L&D should own alone.

It is something L&D and the business co-design and co-own.

---------------------------------------------

Sidebar: Industry Research Insight

Industry research from Deloitte and Gartner reinforces this shift toward performance-linked capability models. Organizations that tie skill development to defined business outcomes consistently outperform those that measure participation alone.

Conclusion: The point is not more metrics. The point is better questions.

------------------------------------------------

Here’s what changes when you treat measurement as a front-end strategy instead of a back-end report.

You stop reporting:

“Field reps completed the certification.”

You start defining at the outset:

“What should happen to close rates in complex deals if this capability improves?”

You stop reporting:

“Satisfaction scores were high.”

You start defining at the outset:

“What decision behavior should improve if this skill strengthens?”

You stop reporting:

“Managers attended the leadership workshop.”

You start defining at the outset:

“What should change in the cadence and quality of 1:1s if leadership capability improves?”

You stop measuring exposure.

You define the effect before you build the program.

Then you design for it.

And measure it against real performance.

So how do you do that?

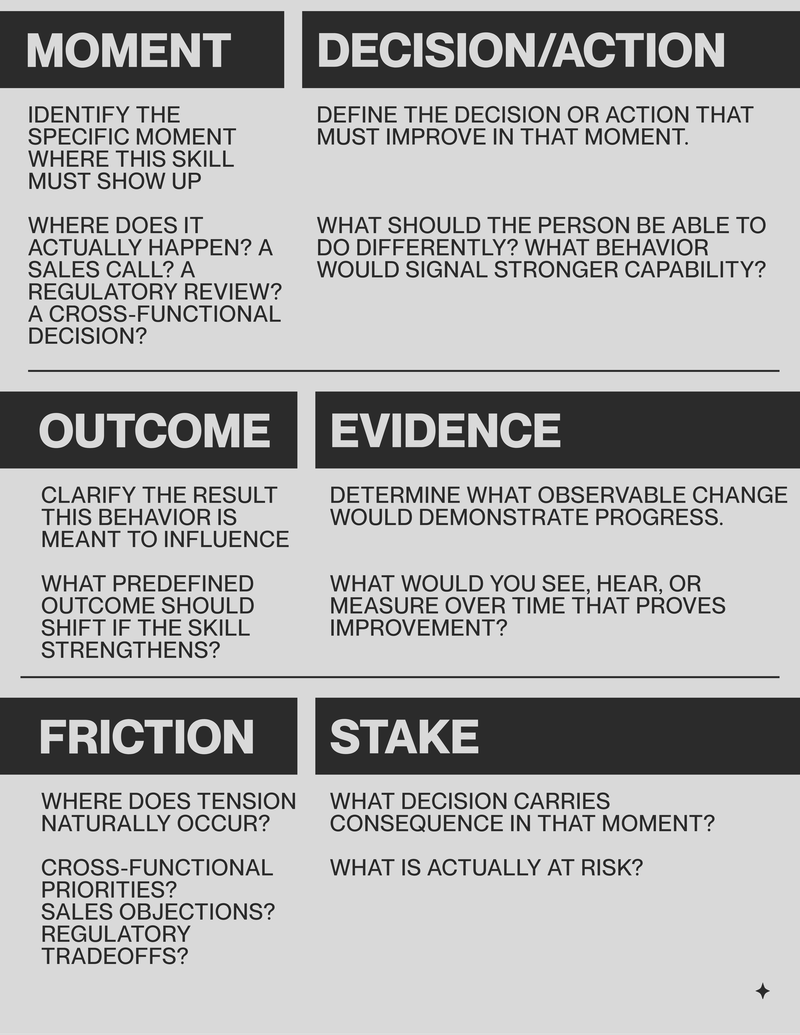

The Performance Impact Alignment Check

Ask these questions during the needs assessment:

- What specific performance moment should improve?

- What observable behavior signals improvement in that moment?

- What business metric or indicator should move if that behavior strengthens?

- Who will observe and validate that shift over time?

If you cannot answer these questions up front, measurement will default to participation.

Now comes the harder part.

Measurement must be shared.

Not reported by L&D after the fact.

Designed together at the start.

The Shared Measurement Model

Shared ownership. Defined up front.

- L&D defines the skill in behavioral terms.

- The business defines the performance outcome that must improve.

- Managers observe and coach in context.

- Employees practice and refine.

Measurement becomes a distributed system, not a dashboard.

And yes, this changes L&D’s identity.

L&D is no longer the “owner” of learning.

Employees own their development.

Managers reinforce it.

The business defines the performance outcomes.

L&D enables the system.

And measurement no longer sits in one place.

Managers observe behavior in real time.

The business tracks the performance outcomes those behaviors influence.

L&D integrates the data, identifies patterns, and adjusts the system.

No single function owns the number.

They co-own the result.

That alignment is what makes measurement meaningful.

Because when skill development is real, something changes.

Decisions are clearer.

Conversations are shorter.

Tradeoffs are sharper.

Outcomes improve in ways you can see.

That is when skill development becomes measurable capability.

Anything else is activity without accountability.